Challenges and Solutions in Beta Testing AI Code Generators

AI code generators are changing software development by automating code writing, improving productivity, and reducing errors. However, beta testing these sophisticated tools presents unique challenges. This article explores the key issues encountered when beta testing AI code generators and offers solutions to overcome them.

Challenges in Beta Testing AI Code Generators

1. Complexity of Code Quality Assurance

Ensuring that AI-generated code meets quality standards is a significant challenge. AI code generators must produce code that is not only syntactically correct but also functional, secure, and maintainable. Beta testers need to evaluate the code against several criteria, including performance, scalability, and adherence to best practices.

2. Handling Diverse Programming Languages and Frameworks

AI code generators must support multiple programming languages and frameworks. This diversity adds complexity to the testing process. Ensuring consistent performance and quality across different environments requires extensive testing and expertise in a range of technologies.

3. Integrating with Existing Development Workflows

AI code generators must integrate seamlessly with existing development workflows, tools, and processes. Beta testers must make sure that the AI tool can be incorporated into different environments without disrupting the development lifecycle. This involves testing compatibility with version control systems, CI/CD pipelines, and other development tools.

4. Managing Security and Privacy Concerns

AI code generators often require access to codebases and repositories, which raises security and privacy concerns. It is essential that the AI tool does not introduce vulnerabilities or expose sensitive information. Beta testers must rigorously examine the security measures and data handling practices of the AI tool.

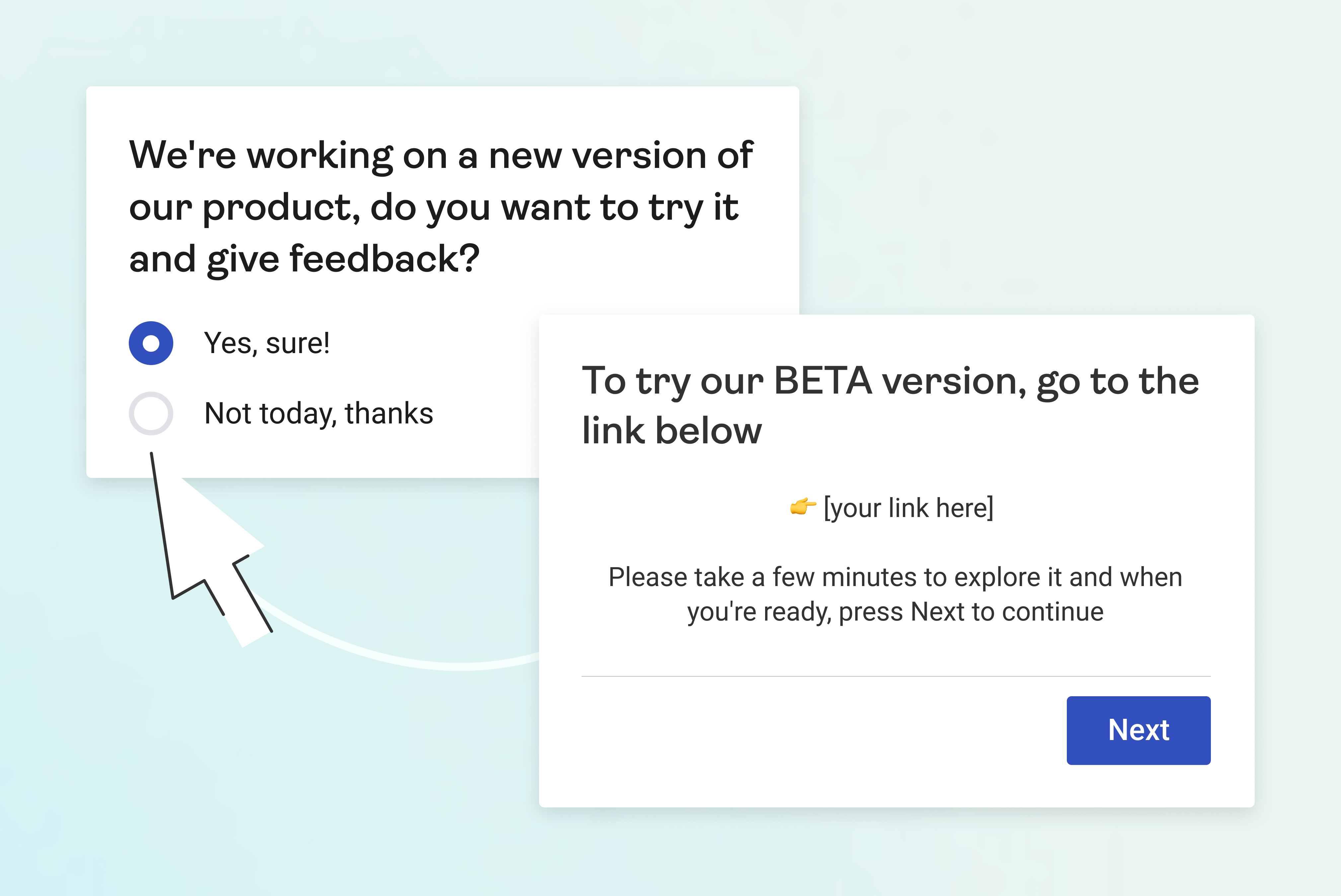

5. User Experience and Adoption

The usability and user experience of AI code generators play a significant role in their adoption. Beta testers need to assess the intuitiveness, ease of use, and learning curve of the tool. Feedback from a diverse group of users is essential to identify and address usability issues.

6. Performance and Scalability

AI code generators must perform efficiently and scale to handle large codebases and high volumes of requests. Beta testers should assess the tool's performance under a range of conditions, using stress testing and benchmarking against real-world scenarios.

Solutions to Overcome Beta Testing Challenges

1. Comprehensive Code Quality Evaluation

Developing a robust code quality evaluation framework is essential. The framework should combine automated and manual testing methods to assess the AI-generated code. Automated tools can check for syntax errors, code smells, and adherence to coding standards. Manual reviews by experienced developers can provide insight into code efficiency, readability, and maintainability.
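The automated half of such a framework can start very small. The sketch below is illustrative only: it assumes the generated code is Python, and the names `QualityReport` and `evaluate_snippet` as well as the 40-line threshold are hypothetical choices rather than part of any particular tool. It rejects snippets that do not parse and flags long or undocumented functions for manual review.

```python
import ast
from dataclasses import dataclass, field


@dataclass
class QualityReport:
    """Result of the automated checks for one generated snippet."""
    syntax_ok: bool
    issues: list[str] = field(default_factory=list)


def evaluate_snippet(source: str, max_function_lines: int = 40) -> QualityReport:
    """Run cheap automated checks; flagged items go to manual review."""
    try:
        tree = ast.parse(source)
    except SyntaxError as exc:
        return QualityReport(syntax_ok=False, issues=[f"syntax error: {exc.msg}"])

    issues = []
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            # Hypothetical "code smell" rule: very long functions.
            length = node.end_lineno - node.lineno + 1
            if length > max_function_lines:
                issues.append(f"{node.name}: {length} lines, consider splitting")
            # Hypothetical coding-standard rule: every function has a docstring.
            if ast.get_docstring(node) is None:
                issues.append(f"{node.name}: missing docstring")
    return QualityReport(syntax_ok=True, issues=issues)
```

A report with `syntax_ok=False` can fail the run outright, while a non-empty `issues` list is a natural queue for the manual reviewers.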

2. Standardized Testing Across Languages and Frameworks

Creating standardized testing protocols for different programming languages and frameworks can streamline the testing process. This includes building test cases and benchmarks tailored to each environment. Using language-specific linters, static analysis tools, and performance profilers helps ensure consistent quality across technologies.
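One way to keep the protocol uniform is to register a check per language in a single table and look it up by file extension. The following is a minimal sketch under stated assumptions: the command-line tools named are real, but which tool is assigned to which language, and the helper name `build_check_command`, are illustrative choices only.

```python
from pathlib import Path

# One check command per file extension. The pairing of tool to language
# is an assumption made for this sketch, not a recommendation.
CHECKS: dict[str, list[str]] = {
    ".py": ["python", "-m", "py_compile"],  # byte-compiles, fails on syntax errors
    ".js": ["node", "--check"],             # parses the file without running it
    ".go": ["gofmt", "-l"],                 # lists files that are not gofmt-formatted
}


def build_check_command(path: str) -> list[str]:
    """Return the command that checks one generated file, chosen by extension."""
    suffix = Path(path).suffix.lower()
    if suffix not in CHECKS:
        raise ValueError(f"no test protocol registered for {suffix!r}")
    return [*CHECKS[suffix], path]
```

The returned list can be handed to `subprocess.run`. Raising on an unknown extension makes gaps in language coverage visible instead of letting unchecked files pass silently.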

3. Seamless Integration Testing

To ensure seamless integration, beta testers should build end-to-end testing environments that replicate real development workflows. This involves integrating the AI code generator with version control systems, CI/CD pipelines, and other essential tools. Automated integration tests can help identify and resolve compatibility issues early in the testing phase.
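A real integration environment involves actual repositories and CI servers, but the control flow of an automated integration test can be sketched in a few lines. The example below is a simplified stand-in, assuming Python output: `run_pipeline` and the two stage functions are hypothetical names, and each stage only imitates what a pipeline step might verify.

```python
from typing import Callable

# A stage is a name plus a check that takes the generated code.
Stage = tuple[str, Callable[[str], bool]]


def run_pipeline(generated_code: str, stages: list[Stage]) -> list[tuple[str, bool]]:
    """Run stages in order and stop at the first failure, as a CI job would."""
    results = []
    for name, check in stages:
        passed = check(generated_code)
        results.append((name, passed))
        if not passed:
            break
    return results


def compiles(code: str) -> bool:
    """Stand-in for a build step: does the code parse as Python?"""
    try:
        compile(code, "<generated>", "exec")
        return True
    except SyntaxError:
        return False


def ends_with_newline(code: str) -> bool:
    """Stand-in for a repository hygiene hook run before commit."""
    return code.endswith("\n")
```

Running `run_pipeline(code, [("build", compiles), ("hygiene", ends_with_newline)])` returns the stages executed so far, so a failing stage is always the last entry.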

4. Rigorous Security and Privacy Assessment

Conducting thorough security assessments is crucial to mitigate the risks associated with AI code generators. This includes penetration testing, code audits, and evaluating the tool's data handling practices. Implementing strict access controls and security protocols can help protect sensitive information and prevent security breaches.
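Part of a code audit can be automated by scanning the generated output itself. The sketch below assumes Python output and uses two deliberately simple, illustrative rules (a regular expression for hardcoded credentials and a check for `eval`/`exec` calls); the function name `scan_generated_code` is hypothetical, and a real audit would rely on a maintained rule set rather than these patterns.

```python
import ast
import re

# Illustrative patterns only; they will miss many real problems.
SECRET_PATTERN = re.compile(
    r"""(password|secret|api_key|token)\s*=\s*["'][^"']+["']""",
    re.IGNORECASE,
)
DANGEROUS_CALLS = {"eval", "exec"}


def scan_generated_code(source: str) -> list[str]:
    """Return human-readable findings for one generated Python snippet."""
    findings = []
    # Rule 1: string literals assigned to credential-like names.
    for lineno, line in enumerate(source.splitlines(), start=1):
        if SECRET_PATTERN.search(line):
            findings.append(f"line {lineno}: possible hardcoded credential")
    # Rule 2: direct calls to functions that execute arbitrary code.
    for node in ast.walk(ast.parse(source)):
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Name)
            and node.func.id in DANGEROUS_CALLS
        ):
            findings.append(f"line {node.lineno}: call to {node.func.id}()")
    return findings
```

An empty list means only that these two rules found nothing; it is not evidence that the code is safe.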

5. User-Centric Design and Feedback Loops

Incorporating user feedback into the development process can significantly improve the usability and adoption of AI code generators. Beta testing should include a diverse range of users, including developers with varying levels of expertise. Regular feedback loops, usability testing sessions, and user surveys can help identify pain points and areas for improvement.

6. Performance Optimization and Scalability Testing

Performance optimization should be a continuous process during beta testing. This involves stress testing, load testing, and benchmarking the AI code generator under different conditions. Identifying bottlenecks and optimizing the underlying algorithms and infrastructure can improve the tool's performance and scalability.
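Benchmarking usually begins with a simple latency harness. The following sketch assumes the tool under test can be called as a function that takes a prompt and returns code; `benchmark` and `fake_generator` are hypothetical names, and the fake generator exists only so the harness can run without a real tool.

```python
import statistics
import time
from typing import Callable


def benchmark(
    generate: Callable[[str], str], prompts: list[str], repeats: int = 5
) -> dict[str, float]:
    """Time every call to `generate` and summarise latency in milliseconds."""
    samples = []
    for _ in range(repeats):
        for prompt in prompts:
            start = time.perf_counter()
            generate(prompt)
            samples.append((time.perf_counter() - start) * 1000)
    samples.sort()
    # Nearest-rank style index for the 95th percentile, clamped to the list.
    p95_index = min(len(samples) - 1, int(0.95 * len(samples)))
    return {
        "calls": float(len(samples)),
        "mean_ms": statistics.mean(samples),
        "p95_ms": samples[p95_index],
        "max_ms": samples[-1],
    }


def fake_generator(prompt: str) -> str:
    """Hypothetical stand-in for the AI code generator under test."""
    return f"# code for: {prompt}"
```

Reporting a high percentile alongside the mean matters for load testing, because a few slow responses can hide behind a healthy average.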

Case Study: Beta Testing an AI Code Generator

To illustrate the beta testing process, consider a hypothetical AI code generator designed to automate JavaScript code writing. The beta testing team faces several challenges, including ensuring code quality, integrating with popular JavaScript frameworks, and addressing security concerns.

Initial Setup and Test Planning

The team starts by creating a comprehensive test plan that defines the scope, objectives, and success criteria for the beta testing phase. They identify the key areas to focus on: code quality, integration, security, usability, and performance.

Code Quality Evaluation

Automated tools like ESLint and Prettier are used to check the syntactic correctness and style adherence of the generated code. Manual code reviews by experienced JavaScript developers provide insight into code efficiency and maintainability.

Integration Testing

The team tests the AI tool's compatibility with popular JavaScript frameworks like React, Angular, and Vue. They create sample projects and integrate the AI-generated code into existing workflows to identify and resolve any compatibility issues.

Security Assessments

Thorough security assessments are conducted to ensure the AI tool does not introduce vulnerabilities. Penetration testing and code audits help identify potential security risks. Data handling practices are evaluated to ensure compliance with privacy regulations.

User Feedback and Usability Testing

A diverse group of JavaScript developers is involved in the beta testing process. Regular feedback sessions and usability testing help identify pain points and areas for improvement. The development team iterates on the tool based on user feedback.

Performance and Scalability Testing

Stress testing and load testing are conducted to evaluate the tool's performance under different conditions. The team identifies bottlenecks and optimizes the tool's algorithms and infrastructure to improve scalability.

Conclusion

Beta testing AI code generators is a complex process that requires a comprehensive approach to a range of challenges. By focusing on code quality, integration, security, usability, and performance, beta testers can help ensure that the resulting AI tools are robust and reliable. Incorporating user feedback and continuous optimization are crucial for the successful adoption of AI code generators in real-world development environments. As AI continues to evolve, effective beta testing practices will play a pivotal role in shaping the future of software development.